Hello! It’s Radio Day, which is a great excuse to do something interesting. The other day my eye fell on an SDR receiver gathering dust in the corner, and off we went.

In general, spectrum painting, or drawing pictures on an SDR spectrogram, is a rather old and simple pastime: we take a picture, run each line through the inverse Fourier transform, and send the resulting signal on the air line by line. For example, there is a finished project on GitHub that directly generates an output file for HackRF or BladeRF. But let’s say I don’t have a HackRF or anything similar, and I still want to transmit a picture, only in some simpler way. What to do?

In principle, one could use a simple laboratory generator or DDS, but their output buffer usually does not exceed several kilobytes, while a video can easily take up tens of megabytes. Wait, but we already have an excellent device at hand for playing long files: a computer audio output! All that remains is to take a radio-frequency carrier generator (27 MHz in my case) and modulate it with a recording played from the computer, using a radio mixer.

A mixer is a non-linear element with two inputs and one output that produces the sum and the difference of the input frequencies. When we listen to a station on 101.6 MHz with a pocket receiver, the receiver sets its local oscillator to 101.5 MHz and subtracts it from the carrier in a mixer, lowering the frequency to 100 kHz and completing the demodulation there. We will do the same thing, only in the other direction: we will add the 27 MHz carrier to the 10-20 kHz audio signal in which the picture is encoded. In the language of SDR spectrograms, this means that the picture will simply shift up in frequency by 27 MHz.
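The sum and difference frequencies are easy to check numerically if the mixer is idealized as a plain multiplier. The sketch below is only that idealization, not a model of any real part; the simulation sample rate, the 1 ms duration, and the 15 kHz test tone are assumptions chosen for illustration.

```python
import numpy as np

fs = 108e6          # simulation sample rate, Hz (assumed: 4x the carrier)
n = 108_000         # 1 ms of signal, so FFT bins are 1 kHz apart
f_carrier = 27e6    # carrier frequency from the article, Hz
f_audio = 15e3      # test tone inside the 10-20 kHz band, Hz (assumed)

t = np.arange(n) / fs
# Ideal mixer: the output is the product of the two inputs
mixed = np.cos(2 * np.pi * f_carrier * t) * np.cos(2 * np.pi * f_audio * t)

spectrum = np.abs(np.fft.rfft(mixed))
freqs = np.fft.rfftfreq(n, d=1 / fs)

# The two strongest components are the difference and the sum frequency
top_two = np.argsort(spectrum)[-2:]
peaks_hz = sorted(int(round(f)) for f in freqs[top_two])
print(peaks_hz)
```

The two peaks land 15 kHz below and 15 kHz above the carrier, which is the "shift up by 27 MHz" together with its mirror copy.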

The simplest mixer can be assembled from diodes. But I was lazy, and I took some ready-made one from MiniCircuits.

The white box is the mixer. To its left is a Red Pitaya used as the carrier generator.

This is where the nuances begin. The first is that the audio card only outputs frequencies up to 20 kHz, and industrial mixers are not optimized for such low frequencies. To obtain an acceptable output signal, you will have to amplify the input signals, which will inevitably lead to nonlinearities, in other words, to the generation of sum and difference frequencies from the audio signal itself:

This limits the operating range from 10 to 20 kHz: above 20 kHz the audio card does not work, below 10 kHz a difference signal from audio frequencies appears (we will see it later).

The second nuance is that the Fourier transform is complex, and not only the intensities but also the phases play a significant role in the frequency and time representations of the signal. In practice, people most often work not with complex numbers directly but with the IQ representation: two orthogonal quadratures shifted by 90 degrees relative to each other. For example, an SDR receiver has two mixers that mix the signal with two copies of the local oscillator signal, 90 degrees apart:

And the HackRF transmitter contains a MAX2839 chip, which mixes the I and Q components of the signal with two orthogonal carriers on two mixers, sums their output signals and sends them further to the antenna path:

Our contraption has only one mixer, which means we transmit the signal in only one quadrature, that is, without taking the phases into account. The Fourier transform of such a signal is symmetrical, which means that in addition to the treasured image to the right of the carrier, we will also see its mirror replica to the left of the carrier, and we will lose half the intensity. It’s not terrible, but it will weaken the signal a little.
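The mirror replica can be seen on a single tone. The sketch below compares a complex (I + jQ) tone with its real part alone; the block length and the bin number are arbitrary assumptions, and `occupied_bins` is a helper made up for this illustration.

```python
import numpy as np

n = 64                      # samples per block (assumed)
k = 10                      # tone placed in FFT bin 10 (assumed)
t = np.arange(n)

iq = np.exp(2j * np.pi * k * t / n)   # both quadratures (I + jQ)
i_only = iq.real                      # single quadrature, as with one mixer

def occupied_bins(x):
    """Return {bin: amplitude} for the bins that carry noticeable energy."""
    amp = np.abs(np.fft.fft(x)) / len(x)
    return {int(b): round(float(amp[b]), 3) for b in np.flatnonzero(amp > 1e-6)}

print(occupied_bins(iq))      # a single component in bin 10
print(occupied_bins(i_only))  # two components: bin 10 and its mirror, bin 54
```

Bin 54 of a 64-point transform is the same as bin -10, so the single-quadrature tone shows up on both sides of zero frequency, and after mixing, on both sides of the carrier.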

Let’s move on to the calculations. We will transmit the image at the standard sampling rate of 44,100 samples per second (Professor Kotelnikov reminds us that for 20 kHz we won’t need more), and each line of the image will take 2048 samples, which gives 2048 points in the spectrum. Because of the aforementioned non-linear effects and the mirror image, we can only use a quarter of them, but in general it’s better to make the picture even smaller. Let it be 256 pixels.
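The numbers in this paragraph can be checked with a few lines of arithmetic. This is only a sanity check under the values quoted above (44,100 samples per second, 2048 points per line, a usable band of 10 to 20 kHz); the variable names are made up for the example.

```python
sr = 44_100          # sampling rate, Hz
nfft = 2048          # samples (and spectrum points) per image line

bin_hz = sr / nfft                 # frequency step between adjacent pixels
line_s = nfft / sr                 # time needed to transmit one line

f_low, f_high = 10_000, 20_000     # usable band from the article, Hz
usable_bins = int((f_high - f_low) / bin_hz)

print(f"bin spacing: {bin_hz:.2f} Hz")
print(f"line time: {line_s * 1e3:.1f} ms")
print(f"points in 10-20 kHz: {usable_bins} of {nfft}")
print(f"a 256-pixel image spans {256 * bin_hz / 1e3:.2f} kHz")
```

The usable band holds a little under a quarter of the 2048 points, and a 256-pixel image fits into it with room to spare.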

That’s basically it. The code is very similar to spectrum painter:

    import numpy as np
    import scipy.io.wavfile
    import imageio.v2 as img

    filename = "img.png"
    sr = 44100  # sampling rate, Hz
    nfft = 2048  # samples (and FFT bins) per image line
    start_pix = nfft - 256 - 1 - 256  # bin from which the image will be embedded

    # Load the image
    pic = img.imread(filename)

    # Take one colour channel, flip it vertically and square the intensities
    # (cast to float first so that uint8 pixel values do not overflow)
    ffts = np.flipud(pic[:, :, 0]).astype(float) ** 2

    # Embed the image starting from the given bin
    fftall = np.zeros((ffts.shape[0], nfft))
    fftall[:, start_pix:(start_pix + pic.shape[1])] = ffts

    # Generate random phase vectors for the FFT bins
    # This is important to prevent high peaks in the output
    phases = 2 * np.pi * np.random.rand(*fftall.shape)
    rffts = fftall * np.exp(1j * phases)

    # Perform the inverse FFT per image line
    ifft = np.fft.ifft(np.fft.ifftshift(rffts, axes=1), axis=1) / np.sqrt(float(nfft))

    # Concatenate the lines into the final signal, take one quadrature, and normalize
    signal = np.real(ifft.flatten())
    signal_norm = np.float32(signal / np.max(np.abs(signal)))

    # Write the signal to a wav file
    scipy.io.wavfile.write('out.wav', sr, signal_norm)

So, is it time to run Doom? But no: programmers run Doom. Engineers run Bad Apple!

As I said above, a real signal without an imaginary component gives a small mirror image relative to the carrier, exactly as with amplitude modulation. Although here it turns out to be noticeably dimmer, probably due to nonlinearities. For the same reason, noise appears near the carrier (at the frequency differences from the picture) and above 22 kHz (at their second harmonic).

Looks good, but I sped up this video a lot. In fact, it takes almost 8 seconds to transfer one frame! Is it possible to make something more similar to a real video? At least five frames per second? Let’s count.

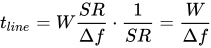

Does it make sense to increase the transmitter’s sampling rate (SR)? It determines the maximum width of the spectrum, while our image occupies the width Δf. With an image width of W pixels, the total spectrum width in points will be

$$N = W \cdot \frac{\mathrm{SR}}{\Delta f}$$

After the Fourier transform, the number of points will remain the same, which means transmitting one line will take

$$t = \frac{N}{\mathrm{SR}} = \frac{W}{\Delta f}$$

So the time it takes to transmit one line does not depend on the sampling rate at all! This means there is no point in increasing it. The time to transmit an entire frame of W × H pixels will be

$$T = t \cdot H = \frac{W \cdot H}{\Delta f}$$

It turns out that for T = 0.2 s (5 frames per second) and Δf = 20 – 10 kHz = 10 kHz one frame cannot exceed about 40 x 40 pixels. In principle, we can already work with this.
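The estimate can be reproduced in a couple of lines, assuming the frame time is W · H / Δf, which is what the numbers in this paragraph imply. The function names are made up for the example.

```python
import math

def frame_time(width, height, bandwidth_hz):
    """Time to send one frame, assuming T = W * H / delta_f."""
    return width * height / bandwidth_hz

def max_pixels(frame_time_s, bandwidth_hz):
    """Largest pixel count that fits into one frame period."""
    return int(round(frame_time_s * bandwidth_hz))

budget = max_pixels(0.2, 10_000)   # 5 frames per second, 10 kHz of bandwidth
side = math.isqrt(budget)          # side of the largest square frame
print(budget, side)
print(frame_time(40, 40, 10_000))  # a 40 x 40 frame fits with some margin
```

The pixel budget is 2000 per frame, so a square frame can be at most 44 pixels on a side, and 40 x 40 leaves a small margin.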

And finally, the most important question: is it really possible to watch the video on an SDR, and not just scroll back through a recording? Why not: for example, you can insert synchronization pulses into the spectrum before the start of each frame and write a simple interface for the receiver that will recognize them. Or you can just take another program for working with SDR. For example, SDRangel draws a spectrogram a specified number of times per second:

All that remains is to choose the sampling rate so that we see exactly 5 frames per second. My frame size is 51×32 pixels, so a sample length of 256 (a little more than 51×4) is enough for the Fourier transform. In this case, the sampling rate will be 256 x 32 x 5 = 40,960 samples per second.
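Put together, generating such a video signal might look like the sketch below. It follows the same idea as the still-image code (random phases, inverse FFT per row, one quadrature), but it is an assumption-laden illustration rather than the author's script: `frames_to_signal` and `start_bin` are hypothetical, the frames are random stand-ins for real video, and the rows are sent top to bottom, so they may need flipping depending on how the receiver draws its spectrogram.

```python
import numpy as np

def frames_to_signal(frames, nfft=256, start_bin=66, seed=0):
    """Turn frames shaped (n_frames, height, width) into one real signal.

    Every image row becomes one block of nfft samples. start_bin is a
    hypothetical choice: at 40,960 samples/s the bins are 160 Hz apart,
    so bin 66 sits at about 10.6 kHz.
    """
    frames = np.asarray(frames, dtype=float)
    n_frames, height, width = frames.shape
    if start_bin + width > nfft // 2:
        raise ValueError("image must stay below the Nyquist bin")
    rows = frames.reshape(n_frames * height, width)
    rng = np.random.default_rng(seed)
    # Random phases keep the bins from adding up into sharp peaks
    phases = 2 * np.pi * rng.random(rows.shape)
    spectrum = np.zeros((rows.shape[0], nfft), dtype=complex)
    spectrum[:, start_bin:start_bin + width] = rows * np.exp(1j * phases)
    # One quadrature only: keep the real part of each inverse FFT
    signal = np.fft.ifft(spectrum, axis=1).real.ravel()
    peak = np.max(np.abs(signal))
    return np.float32(signal / peak) if peak > 0 else np.float32(signal)

fps, height, width, nfft = 5, 32, 51, 256
sr = nfft * height * fps                      # samples per second
frames = np.random.default_rng(1).random((fps, height, width))  # stand-in video
signal = frames_to_signal(frames, nfft=nfft)
print(sr, signal.size / sr)                   # sampling rate and duration in seconds
```

Five frames of 32 rows at 256 samples per row add up to exactly one second of audio at this sampling rate, so the signal can be written to a wav file in the same way as the still image.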

Well, shall we try?

Happy Radio Day! 73 to everyone!

Acknowledgement and Usage Notice

The editorial team at TechBurst Magazine acknowledges the invaluable contribution of the author of the original article that forms the foundation of our publication. We sincerely appreciate the author’s work. All images in this publication are sourced directly from the original article, where a reference to the author’s profile is provided as well. This publication respects the author’s rights and enhances the visibility of their original work. If there are any concerns or the author wishes to discuss this matter further, we welcome an open dialogue to address potential issues and find an amicable resolution. Feel free to contact us through the ‘Contact Us’ section; the link is available in the website footer.