Why did I even do this?

The short answer is YouTube recommendations.

I came across a video by t3ssel8r, really liked the art style, and felt motivated to make something similar.

What do we work with?

I’ve been very attracted to the Godot game engine lately, so we’ll be working with it.

Advantages:

- The engine is quite simple
- Excellent documentation
- A bunch of sample projects
- You can assemble a project for almost anything

Disadvantages:

- The latest major version is still young, i.e. we expect bugs
- Lack of features compared to UE4/5 and Unity
- Different features on different backends

Task

To understand what to do and how to do it, we first need to know what requirements the final result should meet.

General idea:

- Have a pixel-art view:
  - Highlight faces looking at the camera
  - Darken object boundaries
  - Low resolution
- Should work in an HTML5 build

In principle, the requirements are not that complicated, so let’s see if anyone has done something similar before us. Of course they have! It’s not nuclear physics, after all.

Exactly what I need has already been done before me!

But there is one problem: it relies on the Forward+ rendering backend, which exposes the normal buffer, and the shader uses that buffer heavily.

So what’s the problem? This buffer is not initialized when building for HTML5.

Without it, we can’t highlight the faces looking at the camera, so what do we do?

Normal Reconstruction

I didn’t come up with this term right away, but only after the following chain of queries:

“Godot compatibility renderer normal buffer” – Conclusion: the buffer is not initialized in Compatibility rendering mode (HTML5);

“What buffers Godot uses in compatibility renderer” – Conclusion: in addition to the color buffer, Godot creates a depth buffer in all rendering modes;

“Godot reconstruct normals from depth” – I didn’t find any examples of such techniques being used in Godot, but this query gave me the key word pair that helped me find the right resources.

Before going further, let’s go through the basics.

- A normal is a vector perpendicular to a plane.
- A unique plane can be defined by three points that do not lie on one line.
- A plane can also be defined by an equation of the form $Ax + By + Cz + D = 0$.

We can compute the normal to any plane as $\vec{n} = (A, B, C)$ or as $\vec{n} = (p_1 - p_0) \times (p_2 - p_0)$, where $p_0$, $p_1$, $p_2$ are points on the plane. The normal obtained this way will in most cases have a length other than 1, and for practical purposes we need a normal of length 1, so we pass the result through the function normalize().
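As a minimal sketch of the three-point case, the helper below computes a unit normal with cross() and normalize(). The name plane_normal is an assumption made for illustration and does not appear in the project.

```glsl
// Hypothetical helper, not part of the original project.
// Returns the unit normal of the plane passing through p0, p1 and p2.
vec3 plane_normal(vec3 p0, vec3 p1, vec3 p2) {
    vec3 a = p1 - p0; // first edge lying in the plane
    vec3 b = p2 - p0; // second edge lying in the plane
    // cross() yields a vector perpendicular to both edges,
    // normalize() rescales it to length 1.
    return normalize(cross(a, b));
}
```

For example, the points (0,0,0), (1,0,0) and (0,2,0) give cross((1,0,0),(0,2,0)) = (0,0,2), which normalizes to (0,0,1). Swapping p1 and p2 flips the sign of the result, so the order of the points matters. If the three points lie on one line, the cross product is the zero vector and the result is undefined.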

So, “Normal reconstruction”:

- First link – Improved normal reconstruction from depth. The general idea is to compute normals from the positions of the central and surrounding pixels and then take the mean. Since the author then reduced the image resolution, there were almost no artifacts, but this is not quite what I need, because I need a normal buffer of the same size as the color buffer.
- Second link – Accurate Normal Reconstruction from Depth Buffer. A very good article with a wonderful explanation. There are even examples, so let’s see…

I was looking for copper, but I found gold. This is great! This is exactly what I was looking for! It’s time to transfer this to Godot, and explain how the different methods presented work along the way.

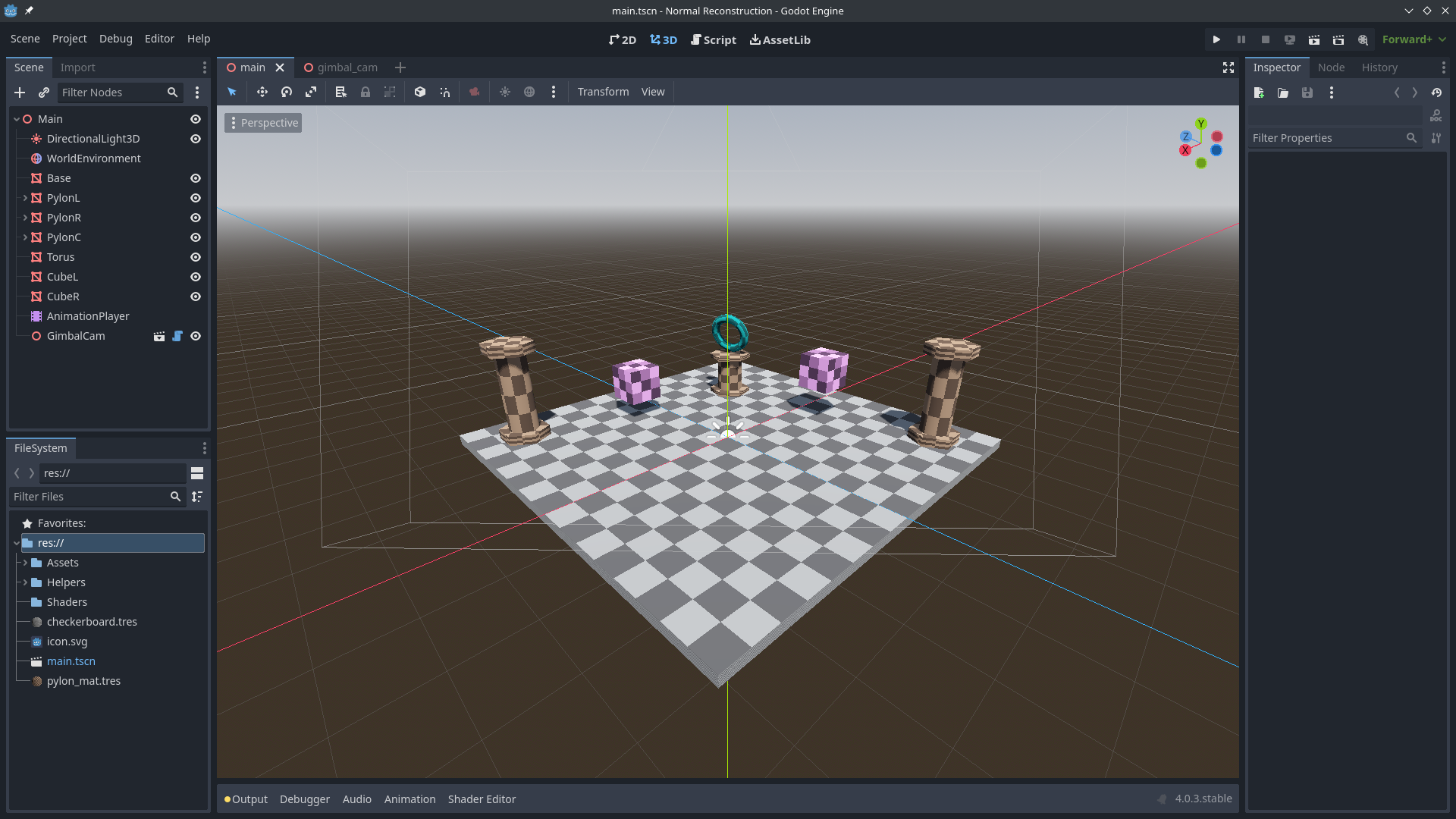

First, let’s create a scene on which we will test our shaders:

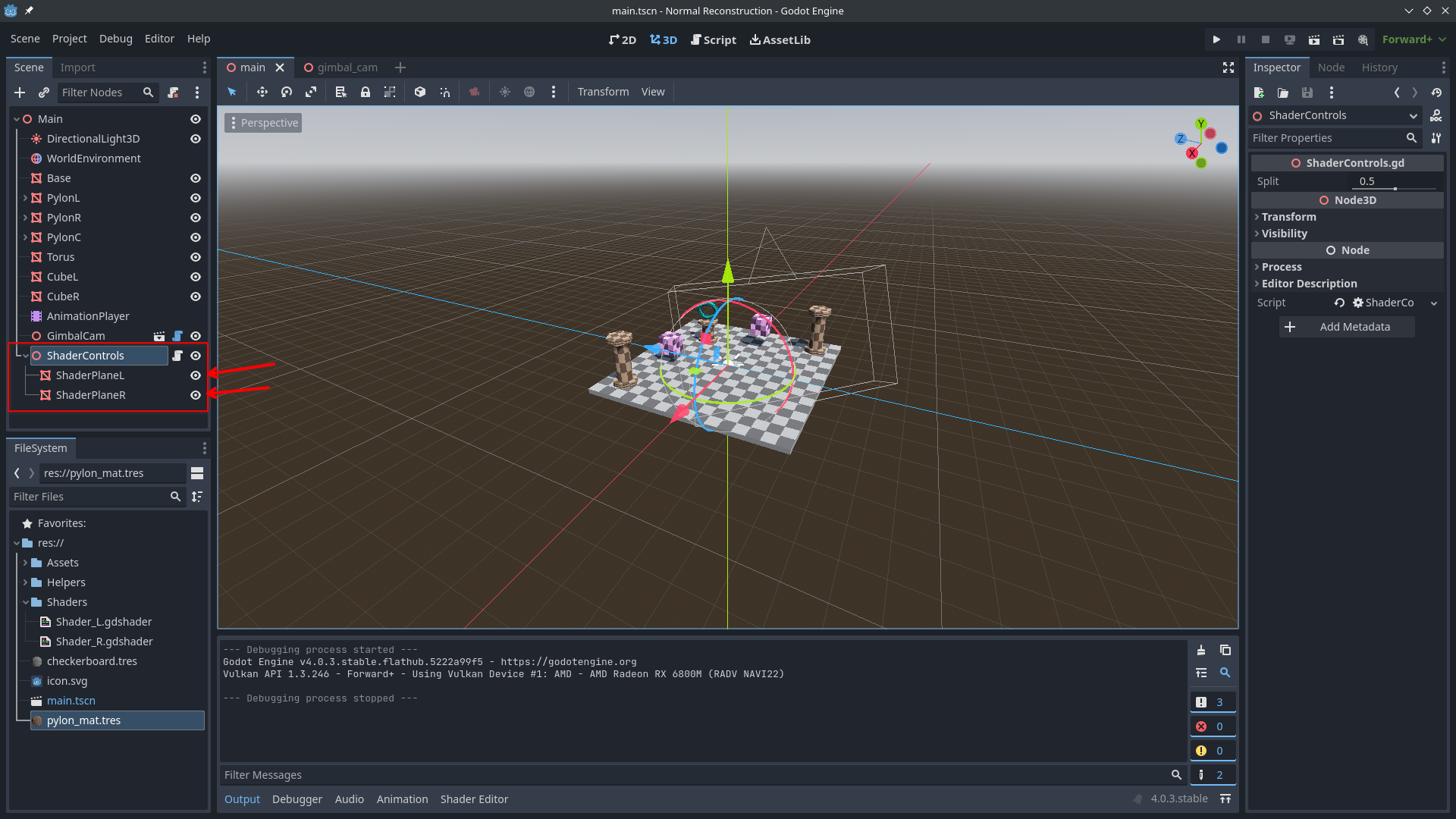

Now let’s create two planes that a shader will stretch to fill the screen:

While we’re still using the Forward+ rendering method, let’s make the left side of the screen display the true normals for the sake of validation and clarity.

shader_type spatial;

render_mode unshaded,depth_draw_never;

uniform sampler2D normals : hint_normal_roughness_texture;

void vertex() {

POSITION = vec4(VERTEX.xy,0.0,1.0);

}

void fragment() {

ALBEDO = texture(normals,SCREEN_UV).rgb;

ALPHA = 1.0;

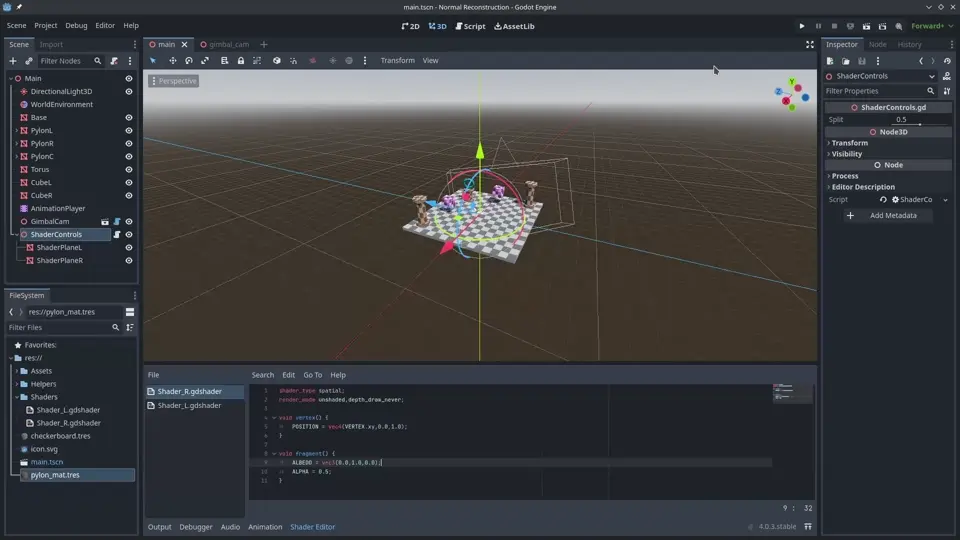

}And the right one is slightly tinted green

shader_type spatial;

render_mode unshaded,depth_draw_never;

void vertex() {

POSITION = vec4(VERTEX.xy,0.0,1.0);

}

void fragment() {

ALBEDO = vec3(0.0,1.0,0.0);

ALPHA = 0.5;

}

It’s working!

Now you can start reconstructing the normals.

Common Functions

The following must go at the beginning of each shader:

shader_type spatial;

render_mode unshaded,depth_draw_never;

uniform sampler2D depth_texture : hint_depth_texture,filter_nearest;

void vertex(){

POSITION = vec4(VERTEX.xy,0.0,1.0);

}

The vertex function simply places the vertices directly in NDC (x: -1..=1, y: -1..=1, z: 0..=1), skipping the usual coordinate transformations.

vec3 viewPosDepth(float depth,vec2 uv,mat4 ipm){

vec3 ndc = vec3(uv*2.0 - 1.0,depth);

vec4 view = ipm * vec4(ndc,1.0);

view.xyz /= view.w;

return view.xyz;

}

vec3 viewPosSampler(sampler2D depth_tex,vec2 uv,mat4 ipm){

float depth = texture(depth_tex,uv).x;

return viewPosDepth(depth,uv,ipm);

}

These helpers build an NDC position from the screen UV and the depth, then convert it to view space, i.e. coordinates relative to the camera.
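A quick way to check the conversion is to display something derived from its output. The sketch below assumes the Forward+ NDC convention used so far and turns the view-space position into a linear distance in front of the camera. The banding visualisation is an assumption made for this example, chosen only because it is easy to judge by eye.

```glsl
shader_type spatial;
render_mode unshaded, depth_draw_never;

uniform sampler2D depth_texture : hint_depth_texture, filter_nearest;

void vertex() {
    // Place the quad directly in NDC so it covers the screen.
    POSITION = vec4(VERTEX.xy, 0.0, 1.0);
}

// Same conversion as in the article: screen UV + depth -> view space.
vec3 viewPosDepth(float depth, vec2 uv, mat4 ipm) {
    vec3 ndc = vec3(uv * 2.0 - 1.0, depth);
    vec4 view = ipm * vec4(ndc, 1.0);
    view.xyz /= view.w;
    return view.xyz;
}

void fragment() {
    float depth = texture(depth_texture, SCREEN_UV).x;
    vec3 view = viewPosDepth(depth, SCREEN_UV, INV_PROJECTION_MATRIX);
    // In view space the camera looks down -Z, so the distance
    // in front of the camera is -view.z.
    float linear_depth = -view.z;
    // Assumed debug output: grey bands repeating every 1 unit of distance.
    ALBEDO = vec3(fract(linear_depth));
}
```

If the conversion is right, the bands on a flat floor stay evenly spaced in world units however far they are from the camera. The raw depth values, in contrast, are not linear in distance.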

Method No. 1 Simple 3 tap

...

vec3 NR_3tap(vec2 uv,vec2 el,mat4 ipm,sampler2D depth_tex){

float depth_c = texture(depth_tex,uv).x;

//early exit if on the end of view distance

if (depth_c == 1.0){

return vec3(0.5);

}

vec3 view_c = viewPosDepth(depth_c,uv,ipm);

vec3 view_r = viewPosSampler(depth_tex,uv + vec2(1.0,0.0)*el,ipm);

vec3 view_u = viewPosSampler(depth_tex,uv + vec2(0.0,1.0)*el,ipm);

vec3 h_der = view_r - view_c;

vec3 v_der = view_u - view_c;

vec3 view_n = normalize(cross(v_der,h_der));

return (view_n+1.0)*0.5;

}

void fragment() {

vec2 uv = SCREEN_UV;

vec2 el = 1.0/VIEWPORT_SIZE;

mat4 ipm = INV_PROJECTION_MATRIX;

vec3 normal = NR_3tap(uv,el,ipm,depth_texture);

ALBEDO = normal;

}

The general idea is as follows:

The green dot marks the coordinates of the current pixel (SCREEN_UV).

We take its view-space position and the positions of the pixels to the right and below, and compute the horizontal and vertical offsets from them. Then we simply find the normal from the resulting offsets.

And the result: on the left are the true normals, on the right are the reconstructed ones

You can’t really see the difference. Then just lower the resolution!

As you can see, there are significant artifacts on the edges of the objects, and there is no normal smoothing that you would expect from MeshInstance.
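One detail of the listing worth spelling out is the return value. A unit normal has components in -1..1, while displayable colors are in 0..1, so the function returns (view_n+1.0)*0.5. A later pass that needs the actual vector has to undo this packing. A minimal sketch, where both helper names are assumptions and not part of the project:

```glsl
// Hypothetical helpers, not part of the original project.

// Pack a unit vector (components in -1..1) into a color (components in 0..1).
vec3 encode_normal(vec3 n) {
    return (n + 1.0) * 0.5;
}

// Unpack a color produced by encode_normal() back into a vector.
vec3 decode_normal(vec3 c) {
    return c * 2.0 - 1.0;
}
```

With this convention the early-exit value vec3(0.5) decodes to the zero vector, which is a convenient marker for "no surface here".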

Method No. 2 Simple 4 tap

...

vec3 NR_4tap(vec2 uv,vec2 el,mat4 ipm,sampler2D depth_tex){

float depth_l = texture(depth_tex,uv - vec2(1.0,0.0)*el).x;

//early exit if on the end of view distance

if (depth_l == 1.0){

return vec3(0.5);

}

vec3 view_l = viewPosDepth(depth_l,uv - vec2(1.0,0.0)*el,ipm);

vec3 view_d = viewPosSampler(depth_tex,uv - vec2(0.0,1.0)*el,ipm);

vec3 view_r = viewPosSampler(depth_tex,uv + vec2(1.0,0.0)*el,ipm);

vec3 view_u = viewPosSampler(depth_tex,uv + vec2(0.0,1.0)*el,ipm);

vec3 h_der = view_r - view_l;

vec3 v_der = view_u - view_d;

vec3 view_n = normalize(cross(v_der,h_der));

return (view_n+1.0)*0.5;

}

...

The principle is the same as in the previous method, but now the pixel below is compared with the pixel above rather than with the center pixel. The same goes for the pixels on the left and right.

And the result:

Even worse, but this was to be expected, since we are doing a “rougher” approximation in this case, completely skipping the pixel we are working with.
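The difference between the first two methods can be written compactly. As a notational assumption, let $P(x, y)$ be the view-space position reconstructed at pixel $(x, y)$, which is the role viewPosSampler plays in the listings:

```latex
\begin{aligned}
\text{3 tap:}\quad h &= P(x+1, y) - P(x, y),   & v &= P(x, y+1) - P(x, y) \\
\text{4 tap:}\quad h &= P(x+1, y) - P(x-1, y), & v &= P(x, y+1) - P(x, y-1) \\
\text{both:}\quad n &= \frac{v \times h}{\lVert v \times h \rVert}
\end{aligned}
```

The 3 tap version is a one-sided difference. The 4 tap version is a central difference spanning two pixels, so a depth jump on either side of the current pixel ends up in its normal.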

Method No. 3 Improved 5 tap

...

vec3 NR_5tap(vec2 uv,vec2 el,mat4 ipm,sampler2D depth_tex){

float depth_c = texture(depth_tex,uv).x;

//early exit if on the end of view distance

if (depth_c == 1.0){

return vec3(0.5);

}

vec3 view_c = viewPosDepth(depth_c,uv,ipm);

vec3 view_l = viewPosSampler(depth_tex,uv - vec2(1.0,0.0)*el,ipm);

vec3 view_d = viewPosSampler(depth_tex,uv - vec2(0.0,1.0)*el,ipm);

vec3 view_r = viewPosSampler(depth_tex,uv + vec2(1.0,0.0)*el,ipm);

vec3 view_u = viewPosSampler(depth_tex,uv + vec2(0.0,1.0)*el,ipm);

vec3 l = view_c - view_l;

vec3 r = view_r - view_c;

vec3 d = view_c - view_d;

vec3 u = view_u - view_c;

vec3 h_der = abs(l.z) < abs(r.z) ? l : r;

vec3 v_der = abs(d.z) < abs(u.z) ? d : u;

vec3 view_n = normalize(cross(v_der,h_der));

return (view_n+1.0)*0.5;

}

...

In this method, we compute the difference in positions for each of the four directions, keeping the orientation consistent along each axis: both horizontal differences point the same way, and so do both vertical ones (this is important for the cross() function).

Next, for each axis we pick the difference with the smaller change in depth, i.e. the “closer” neighbor, and compute the normal from the chosen horizontal and vertical differences:

As you can see, artifacts are still present, but they are few and barely visible.
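A worked example of the selection rule, using made-up numbers (the depths are assumptions chosen for illustration, not values from the demo scene). Suppose the view-space $z$ of the left, center and right pixels is $z_l = -2.0$, $z_c = -2.1$ and $z_r = -5.0$, so the right neighbor belongs to a distant background object:

```latex
\begin{aligned}
l_z &= z_c - z_l = -2.1 - (-2.0) = -0.1 \\
r_z &= z_r - z_c = -5.0 - (-2.1) = -2.9 \\
|l_z| = 0.1 &< |r_z| = 2.9 \;\Rightarrow\; h = l
\end{aligned}
```

The horizontal difference is therefore taken from the left neighbor, which lies on the same surface, and the background pixel does not affect the normal.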

Method No. 4 Accurate 9 tap

...

vec3 NR_9tap(vec2 uv,vec2 el,mat4 ipm,sampler2D depth_tex){

vec3 view_c = viewPosSampler(depth_tex,uv,ipm);

vec3 view_l = viewPosSampler(depth_tex,uv - vec2(1.0,0.0)*el,ipm);

vec3 view_r = viewPosSampler(depth_tex,uv + vec2(1.0,0.0)*el,ipm);

vec3 view_d = viewPosSampler(depth_tex,uv - vec2(0.0,1.0)*el,ipm);

vec3 view_u = viewPosSampler(depth_tex,uv + vec2(0.0,1.0)*el,ipm);

vec3 l = view_c - view_l;

vec3 r = view_r - view_c;

vec3 d = view_c - view_d;

vec3 u = view_u - view_c;

//decide from which direction to sample

//center depth

float depth_c = texture(depth_tex,uv).x;

//early exit if on the end of view distance

if (depth_c == 1.0){

return vec3(0.5);

}

//horizontal depths

vec4 H = vec4(

texture(depth_tex,uv - vec2(1.0,0.0)*el).x,

texture(depth_tex,uv - vec2(2.0,0.0)*el).x,

texture(depth_tex,uv + vec2(1.0,0.0)*el).x,

texture(depth_tex,uv + vec2(2.0,0.0)*el).x

);

//vertical depths

vec4 V = vec4(

texture(depth_tex,uv - vec2(0.0,1.0)*el).x,

texture(depth_tex,uv - vec2(0.0,2.0)*el).x,

texture(depth_tex,uv + vec2(0.0,1.0)*el).x,

texture(depth_tex,uv + vec2(0.0,2.0)*el).x

);

//find diff of true center and extrapolated one

vec2 he = abs((2.0*H.xz - H.yw) - depth_c);

vec2 ve = abs((2.0*V.xz - V.yw) - depth_c);

vec3 h_der = he.x < he.y ? l : r;

vec3 v_der = ve.x < ve.y ? d : u;

vec3 view_n = normalize(cross(v_der,h_der));

return (view_n+1.0)*0.5;

}

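...

This is exactly the method described in this article. It starts like the previous method, but now we decide which side to use by extrapolating the depth toward the center. Let $d_0$ be the depth of the central pixel and $d_{\pm 1}$, $d_{\pm 2}$ the depths one and two pixels to the right (+) or left (-):

- Extend the line through $d_{-2}$ and $d_{-1}$ and get $e_l = 2d_{-1} - d_{-2}$
- Extend the line through $d_{+2}$ and $d_{+1}$ and get $e_r = 2d_{+1} - d_{+2}$
- If $|e_l - d_0| < |e_r - d_0|$, then $d_0$ is located on the left surface; otherwise $d_0$ is located on the right surface

The vertical direction is handled in the same way.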
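To make the rule concrete, here is a worked example with made-up depth values (assumptions for illustration only). Let $d_0$ be the depth of the central pixel and $d_{\pm 1}$, $d_{\pm 2}$ the depths one and two pixels to the right (+) or left (-). The center sits on the left surface just before an edge: $d_{-2} = 0.50$, $d_{-1} = 0.52$, $d_0 = 0.54$, $d_{+1} = 0.80$, $d_{+2} = 0.81$.

```latex
\begin{aligned}
e_l &= 2 d_{-1} - d_{-2} = 2 \cdot 0.52 - 0.50 = 0.54, & |e_l - d_0| &= 0 \\
e_r &= 2 d_{+1} - d_{+2} = 2 \cdot 0.80 - 0.81 = 0.79, & |e_r - d_0| &= 0.25
\end{aligned}
```

The left extrapolation lands exactly on the center depth, so the central pixel is treated as part of the left surface and the left difference is used. This corresponds to he.x < he.y in the code.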

This method is almost perfect: artifacts remain only where a feature is less than 2 pixels wide. It can still be improved by reducing the number of conversions from NDC to view coordinates.

Method No. 5 Method No. 4 Improved by Me

...

vec3 NR_9tap_plus(vec2 uv,vec2 el,mat4 ipm,sampler2D depth_tex){

//center depth

float depth_c = texture(depth_tex,uv).x;

//early exit if on the end of view distance

if (depth_c == 1.0){

return vec3(0.5);

}

//horizontal depths

vec4 H = vec4(

texture(depth_tex,uv - vec2(1.0,0.0)*el).x,

texture(depth_tex,uv - vec2(2.0,0.0)*el).x,

texture(depth_tex,uv + vec2(1.0,0.0)*el).x,

texture(depth_tex,uv + vec2(2.0,0.0)*el).x

);

//vertical depths

vec4 V = vec4(

texture(depth_tex,uv - vec2(0.0,1.0)*el).x,

texture(depth_tex,uv - vec2(0.0,2.0)*el).x,

texture(depth_tex,uv + vec2(0.0,1.0)*el).x,

texture(depth_tex,uv + vec2(0.0,2.0)*el).x

);

//find diff of true center and extrapolated one

vec2 he = abs((2.0*H.xz - H.yw) - depth_c);

vec2 ve = abs((2.0*V.xz - V.yw) - depth_c);

//from which direction to sample

float h_sign = he.x < he.y ? -1.0 : 1.0;

float v_sign = ve.x < ve.y ? -1.0 : 1.0;

vec3 view_h = viewPosDepth(H[1 + int(h_sign)],uv + vec2(h_sign,0.0)*el,ipm);

vec3 view_v = viewPosDepth(V[1 + int(v_sign)],uv + vec2(0.0,v_sign)*el,ipm);

vec3 view_c = viewPosDepth(depth_c,uv,ipm);

vec3 h_der = h_sign*(view_h - view_c);

vec3 v_der = v_sign*(view_v - view_c);

vec3 view_n = normalize(cross(v_der,h_der));

return (view_n+1.0)*0.5;

}

...

While the changes don’t look major, they remove 5 redundant depth buffer reads and 2 conversions from NDC to view coordinates. Visually, the result is no different from method 4, but frame time drops by 10% on my integrated graphics card, so I consider it a success.

Build for HTML5

In Compatibility rendering mode, Godot uses a different NDC convention than in the Forward+ mode we’ve worked with so far, so we need to update the functions that depend on NDC:

void vertex(){

POSITION = vec4(VERTEX.xy,-1.0,1.0);

}

vec3 viewPosDepth(float depth,vec2 uv,mat4 ipm){

vec3 ndc = vec3(uv,depth)*2.0 - 1.0;

vec4 view = ipm * vec4(ndc,1.0);

view.xyz /= view.w;

return view.xyz;

}

Difference in the depth range:

- In Forward+ z: 0..=1
- In Compatibility z: -1..=1
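If you want to keep a single shader file for both backends, one option is to switch the convention with a uniform. This is only a sketch: the uniform compatibility_ndc is a hypothetical name that you would have to set yourself (from the material or from a script), and it is not something the project or the engine provides.

```glsl
shader_type spatial;
render_mode unshaded, depth_draw_never;

uniform sampler2D depth_texture : hint_depth_texture, filter_nearest;
// Hypothetical toggle: set to true when running on the Compatibility renderer.
uniform bool compatibility_ndc = false;

void vertex() {
    // Same z values as in the two vertex functions of the article:
    // 0.0 for Forward+, -1.0 for Compatibility.
    POSITION = vec4(VERTEX.xy, compatibility_ndc ? -1.0 : 0.0, 1.0);
}

vec3 viewPosDepth(float depth, vec2 uv, mat4 ipm) {
    // x and y are always remapped from 0..1 to -1..1,
    // z is remapped only for the Compatibility convention.
    float z = compatibility_ndc ? depth * 2.0 - 1.0 : depth;
    vec3 ndc = vec3(uv * 2.0 - 1.0, z);
    vec4 view = ipm * vec4(ndc, 1.0);
    view.xyz /= view.w;
    return view.xyz;
}
```

The reconstruction functions and fragment() stay unchanged, since they only go through viewPosDepth.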

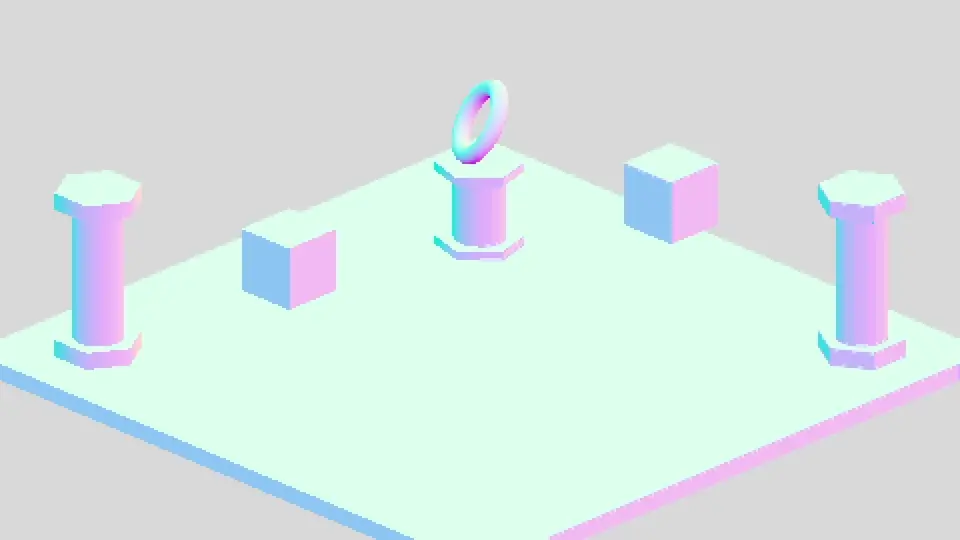

Final Result

After a lot of tinkering with the interface, I’ve made a mini project that you can look at in this web demo.

I hope you enjoyed my first article (can it even be called that?). If you are interested, you can take a look at the source code of the project.

———-

Acknowledgment and Usage Notice

The editorial team at TechBurst Magazine acknowledges the invaluable contribution of the author of the original article that forms the foundation of our publication. We sincerely appreciate the author’s work. All images in this publication are sourced directly from the original article, where a reference to the author’s profile is provided as well. This publication respects the author’s rights and enhances the visibility of their original work. If there are any concerns or the author wishes to discuss this matter further, we welcome an open dialogue to address potential issues and find an amicable resolution. Feel free to contact us through the ‘Contact Us’ section; the link is available in the website footer.